Difference Between Data Warehouse and Database

Does your business deal with a lot of transactions each day? Do you have years of historical data you want to analyze to improve your business? Great! Then you need a database and a data warehouse… but which data goes where?

Databases and data warehouses are both systems that store data. But they serve very different purposes. In this article, we’ll explain what they do, the key differences between them, and why using them effectively is essential for you to grow your business.

We’ll start with some high-level definitions before giving you more detailed explanations.

What is a Database?

A database stores real-time information about one particular part of your business: its main job is to process the daily transactions that your company makes, e.g., recording which items have sold. Databases handle a massive volume of simple queries very quickly.

What is a Data Warehouse?

A data warehouse is a system that pulls together data from many different sources within an organization for reporting and analysis. The reports created from complex queries within a data warehouse are used to make business decisions.

A data warehouse stores historical data about your business so that you can analyze and extract insights from it. It does not store current information, nor is it updated in real-time.

Data Warehouse vs. Database

Let’s dive into the main differences between data warehouses and databases.

Processing Types: OLAP vs OLTP

The most significant difference between databases and data warehouses is how they process data.

Databases use OnLine Transactional Processing (OLTP) to delete, insert, replace, and update large numbers of short online transactions quickly. This type of processing immediately responds to user requests, and so is used to process the day-to-day operations of a business in real-time. For example, if a user wants to reserve a hotel room using an online booking form, the process is executed with OLTP.

Data warehouses use OnLine Analytical Processing (OLAP) to analyze massive volumes of data rapidly. This process gives analysts the power to look at your data from different points of view. For example, even though your database records sales data for every minute of every day, you may just want to know the total amount sold each day. To do this, you need to collect and sum the sales data together for each day. OLAP is specifically designed to do this and using it for data warehousing 1000x faster than if you used OLTP to perform the same calculation.

Optimization

A database is optimized to update (add, modify, or delete) data with maximum speed and efficiency. Response times from databases need to be extremely quick for efficient transaction processing. The most important aspect of a database is that it records the write operation in the system; a company won’t be in business very long if its database didn’t make a record of every purchase!

Data warehouses are optimized to rapidly execute a low number of complex queries on large multi-dimensional datasets.

Data Structure

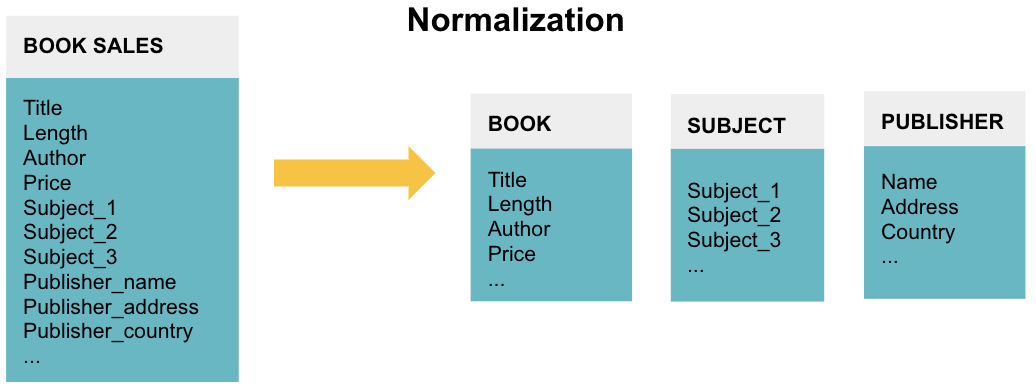

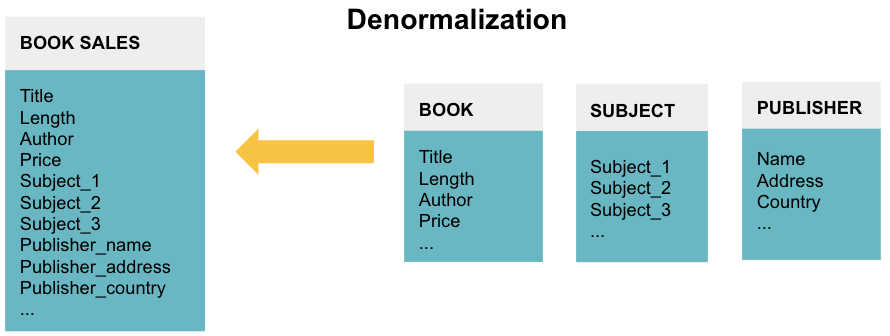

The data in databases are normalized. The goal of normalization is to reduce and even eliminate data redundancy, i.e., storing the same piece of data more than once. This reduction of duplicate data leads to increased consistency and, thus, more accurate data as the database stores it in only one place.

Normalizing data splits it into many different tables. Each table represents a separate entity of the data. For example, a database recording BOOK SALES may have three tables to denote BOOK information, the SUBJECT covered in the book, and the PUBLISHER.

Normalizing data ensures the database takes up minimal disk space and so it is memory efficient. However, it is not query efficient. Querying a normalized database can be slow and cumbersome. Since businesses want to perform complex queries on the data in their data warehouse, that data is often denormalized and contains repeated data for easier access.

Data Analysis

Databases usually just process transactions, but it is also possible to perform data analysis with them. However, in-depth exploration is challenging for both the user and computer due to the normalized data structure and the large number of table joins you need to perform. It requires a skilled developer or analyst to create and execute complex queries on a DataBase Management System (DBSM), which takes up a lot of time and computing resources. Moreover, the analysis does not go deep - the best you can get is a one-time static report as databases just give a snapshot of data at a specific time.

Data warehouses are designed to perform complex analytical queries on large multi-dimensional datasets in a straightforward manner. There is no need to learn advanced theory or how to use sophisticated DBMS software. Not only is the analysis simpler to perform, but the results are much more useful; you can dive deep and see how your data changes over time, rather than the snapshot that databases provide.

Data Timeline

Databases process the day-to-day transactions for one aspect of the business. Therefore, they typically contain current, rather than historical data about one business process.

Data warehouses are used for analytical purposes and business reporting. Data warehouses typically store historical data by integrating copies of transaction data from disparate sources. Data warehouses can also use real-time data feeds for reports that use the most current, integrated information.

Concurrent Users

Databases support thousands of concurrent users because they are updated in real-time to reflect the business’s transactions. Thus, many users need to interact with the database simultaneously without affecting its performance.

However, only one user can modify a piece of data at a time - it would be disastrous if two users overwrote the same information in different ways at the same time!

In contrast, data warehouses support a limited number of concurrent users. A data warehouse is separated from front-end applications, and using it involves writing and executing complex queries. These queries are computationally expensive, and so only a small number of people can use the system simultaneously.

ACID Compliance

Database transactions usually are executed in an ACID (Atomic, Consistent, Isolated, and Durable) compliant manner. This compliance ensures that data changes in a reliable and high-integrity way. Therefore, it can be trusted even in the event of errors or power failures. Since the database is a record of business transactions, it must record each one with the utmost integrity.

Since data warehouses focus on reading, rather than modifying, historical data from many different sources, ACID compliance is less strictly enforced. However, the top cloud providers like Redshift and Panoply do ensure that their queries are ACID compliant where possible. For instance, this is always the case when using MySQL and PostgreSQL.

Database vs. Data Warehouse SLA’s

Most SLAs for databases state that they must meet 99.99% uptime because any system failure could result in lost revenue and lawsuits.

SLAs for some really large data warehouses often have downtime built in to accommodate periodic uploads of new data. This is less common for modern data warehousing.

Database Use Cases

Databases process the day-to-day transactions in an organization. Some examples of database applications include:

- An e-commerce website creating an order for a product it has sold

- An airline using an online booking system

- A hospital registering a patient

- A bank adding an ATM withdrawal transaction to an account

Data Warehouse Use Cases

Data warehouses provide high-level reporting and analysis that empower businesses to make more informed business. Use cases include:

- Segmenting customers into different groups based on their past purchases to provide them with more tailored content

- Predicting customer churn using the last ten years of sales data

- Creating demand and sales forecasts to decide which areas to focus on next quarter

Database vs. Data Warehouse Comparison

| Property | Database | Data Warehouse |

| Processing Method | OnLine Transaction Processing (OLTP) | OnLine Analytical Processing (OLAP) |

| Optimization | Deletes, inserts, replaces and updates large numbers of short online transactions quickly. | Rapidly analyze massive volumes of data and provide different viewpoints for analysts. |

| Data structure | Highly normalized data structure with many different tables containing no redundant data. Thus, data is more accurate but slow to retrieve. |

Denormalized data structure with few tables containing repeat data. Thus, data is potentially less accurate but fast to retrieve. |

| Data timeline | Current, real-time data for one part of the business | Historical data for all parts of the business |

| Data analysis | Analysis is slow and painful due to the large number of table joins needed and the small time frame of data available. | Analysis is fast and easy due to the small number of table joins needed and the extensive time frame of data available. |

| Concurrent users | Thousands of concurrent users supported. However, only one user can modify each piece of data at a time. |

Small number of concurrent users. |

| ACID compliance | Records data in an ACID-compliant manner to ensure the highest levels of integrity. | Not always ACID-compliant though some companies do offer it. |

| Uptime | 99.99% uptime | Downtime is built-in to accommodate periodic uploads of new data |

| Storage | Limited to a single data source from a particular business function | All data sources from all business functions |

| Query type | Simple transactional queries | Complex queries for in-depth analysis |

| Data summary | Highly granular and precise | As granular and precise as you want it to be |

Now you understand the difference between a database and a data warehouse and when to use which one. Your business needs both an effective database and data warehouse solution to truly succeed in today’s economy.

Panoply is a secure place to store, sync, and access all your business data. Panoply can be set up in minutes, requires minimal on-going maintenance, and provides online support, including access to experienced data architects. Free 21-Day Proof of Value.

Visit Panoply online

Visit Panoply online