ETL Tools: Compare the Best Cloud-Based & Open Source Tools

There are a broad variety of ETL tools available, each with its own advantages and drawbacks. Gaining an understanding of these differences can help you choose the best ETL tool for your needs.

Background

Modern companies receive data from multiple sources, in many different formats, with the volume of data being unprecedented. Making sense of this data, finding patterns, and identifying actionable insights has become more complex, and this is where the Extract, Transform, and Load (ETL) process, and specifically ETL tools, can add tremendous value.

ETL is the process of extracting data from different sources, transforming this data so that is standardized and useable across the organization, and loading this data to a data warehouse where it can be queried and used for various Business Intelligence (BI) purposes.

ETL tools are critical when it comes to the ETL process. While some companies prefer to manually code an ETL process from start to finish, this can result in tremendous inefficiencies and frustration, along with excessive use of resources including time and budgets. Advantages of this approach include having a fully customized solution, but often maintaining and scaling this mean that the drawbacks outweigh the benefits. Again, many companies do choose to build their own ETL process, generally using Python, and there will be more about this in future posts.

The benefits of ETL tools include:

-

Scalability: of course hand-coding and managing the ETL process can be beneficial in the short-term, but as data sources, volumes, and other complexities increase, scaling and managing this becomes increasingly difficult. ETL tools, especially cloud-based ETL tools, remove this obstacle as they scale as your needs grow.

-

All In One Place Simplicity: A combination of having some of the process onsite, other parts remote and some in the cloud, can become a nightmare to integrate. With cloud-based ETL tools, one tool can be used to manage the entire process, reducing extra layers of dependencies.

-

Real-time: Building a real-time ETL process manually, especially while not disrupting business operations, is a challenge. With ETL tools handling this for you, having real-time data at your fingertips, from sources throughout the organization, becomes a lot easier.

-

Maintenance: Instead of your development team constantly fixing bugs and errors, making use of ETL tools means that maintenance is handled automatically, as patches and updates propagate seamlessly without your intervention. ETL testing tools can also be used to ensure data completeness, accuracy and integrity.

-

Compliance: storing and using data is not the wild west that it used to be. With often complex legislation like GDPR and HIPAA in place, ETL tools can ensure that you’re on the right side of compliance.

We’ll do an ETL tools comparison to look at some of the best ETL tools out there to ensure your business is optimized.

Top ETL Tools

There are many options when choosing the best ETL tools for your requirements. In this post we’ll primarily look at cloud-based ETL tools and open source ETL tools.

Cloud-based ETL tools

Cloud-based ETL tools offer real-time, streaming data processing, scalability and integrations with a constantly growing number of data sources. These are some of the more popular ETL tools:

1. Fivetran

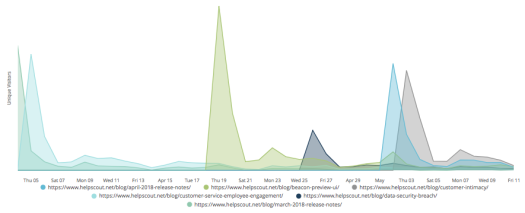

Image source: https://mattmazur.com/2018/05/11/looker-daily-uniques/

Fivetran will quickly replicate all your business data to your data warehouse, without the need for maintenance, configuration, or data pipelines. Connect anything from Facebook ads to Zendesk, without having to write tons of code, and provides for ELT transformation.

Advantages: Quick and easy setup; lets you store all your data yourself, so you never lose access to information even if you stop using a source application; allows for up-to-date analytics; full historical sync when connecting a data source (so you can even query deleted data); excellent support

Disadvantages: Lack of detailed logging and progress reporting; monitoring only

Pricing: Pricing available on request

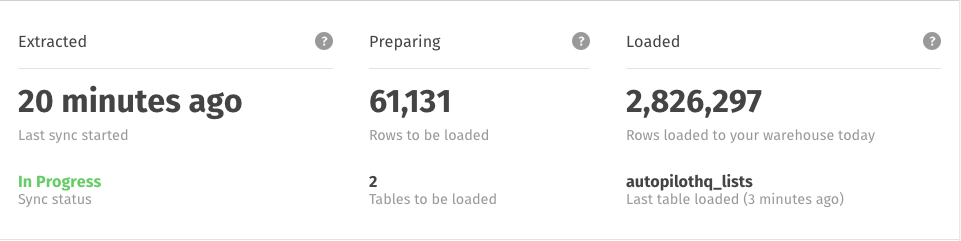

2. Blendo

Image source: https://www.facebook.com/blendo.co/photos/rpp.1551560608500332/2063211434001911/?type=3&theater

Blendo enables you to integrate your data in minutes, with no maintenance, no coding required, and no ETL scripts. It is built especially for more non-technical users, and allows you to collect data from any cloud service, load it into your data warehouse, and it optimizes your data according to your data warehouse. You can choose how often you want to pull data from your source, and monitor your usage.

Advantages: Good customer support; popular for integrating data from Xero accounting software; quick setup

Disadvantages: Refreshes every 15 minutes; does not show progress of first-time import

Pricing: From $125 per month for the standard package to $1,000 per month for the advanced package

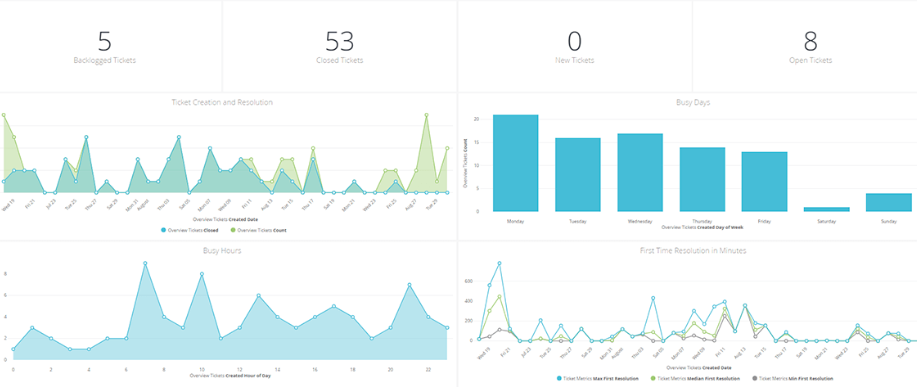

3. Stitch

Image source: https://www.stitchdata.com/docs/getting-started

Stitch describe themselves as a “cloud-first, developer-focused platform for rapidly moving data.” Stitch, which is built on open source Singer, supports the integration of data from a wide variety of sources, and their offering includes free historical data from your database and SaaS tools, selective replication, multiple user accounts and integrates with many data warehouses and analysis tools. Getting started is easy with self-serve and freemium options.

Advantages: Generous free tier; powerful performance;

Disadvantages: UI takes a while to get used to

Pricing: From $100 per month to $1,000 per month, also includes a free plan (including 5 million rows per month and selected free integrations)

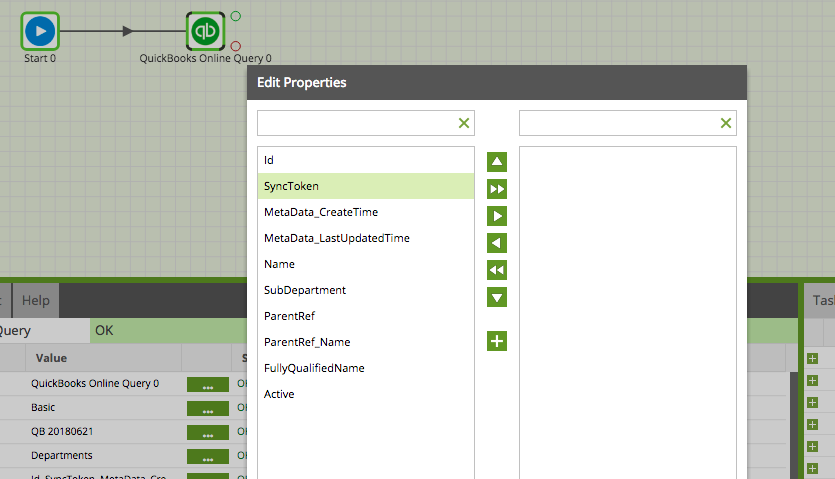

4. Matillion

Image source: https://www.matillion.com/blog/redshift/quickbooks-query-in-matillion-etl-for-amazon-redshift/

Matillion is purpose-built for Google BigQuery and Amazon Redshift, and allows you to integrate with a number of sources. It has many Amazon integrations specifically, so if your organization is already using Amazon products, this could be a good addition, but does tie you down somewhat to a specific vendor.

Advantages: Large selection of pre-built connectors; good integration with Amazon

Disadvantages: May need extra coding; no on-premises installation option; complex billing; error handling not built-in

Pricing: Pricing on Matillion ETL is dependent on instance size, from “Medium” at $1.37 per hour, to “XLarge” at $5.48 per hour.

5. SnapLogic

Image source: https://docs-snaplogic.atlassian.net/wiki/spaces/SD/pages/1438521/SmartLink

SnapLogic is a platform to integrate applications and data, allowing you to quickly connect apps and data sources. The company is also branching out into connecting and integrating data from IoT devices.

Advantages: Includes many built-in integrations, and easy tracking of feeds into a system

Disadvantages: Can take time to understand how the platform works; error handling not built-in

Pricing: Available on request

Open Source ETL Tools

Open source ETL tools can be a low-cost alternative to commercial ETL solutions. Open source ETL tools are tried and tested, and most are kept up-to-date by a community invested in their success. Most open source ETL tools will not work for organizations’ specific needs out of the box, but will require custom coding and integrations.

1. Apache Airflow

Apache Airflow (currently in “incubator” status, meaning that is is not yet endorsed by the Apache Software Foundation) is a workflow automation and scheduling system. It can be used to build a data pipeline to populate a data warehouse and (with some coding) can be used to develop reusable and parameterizable ETL processes. While it is used in the ETL process, Airflow is not an interactive ETL tool.

2. Apache Kafka

Apache Kafka is an open-source stream-processing software platform developed by the Apache Software Foundation. Kafka enables stream processing using the Kafka Streams API, whereby the stream processor receives one record at a time, processes it, and can produce one or more output records for downstream processors. The data is then loaded onto the target system. Kafka is based around four APIs: the Producer API, the Consumer API, the Streams API, and the Connector API.

3. Apache NiFi

Apache NiFi is designed to automate the flow of data between software systems. It features a web-based user interface and is highly configurable. It is known for its security options, data provenance and extensibility. While it can form part of an ETL solution, it is not in and of itself an interactive ETL tool.

Selecting The Right ETL Tool For You

Selecting the right tool for your requirements depends on a number of factors, including:

-

Your data needs. If you don’t need real-time updates, or if the volume of data you’re currently processing (and expecting to process in future) is relatively small, then ETL tools that make improvements to your current ETL process are probably a better option than more comprehensive, end-to-end ETL tools. Similarly, if you are regularly processing petabytes of data, then ETL tools for big data should be looked into. Something else to bear in mind is that if having the latest, real-time data is critical to your business operations, the reliance on one comprehensive ETL tool, especially a more lightweight one, is a major risk should it stop functioning, either partially or completely.

-

Complexity. If you’re pulling data from a small number of sources, you will have very different needs than an organization pulling data from an ever-increasing number of sources. One thing to note is that the pace of data inflow is not slowing down any time soon, and new business tools/data sources are constantly popping up; meaning that while a decision based on your organization’s needs today might point to a lightweight or inexpensive solution, this could cost a lot more in the long run. The ideal tool is scalable, so that today’s business needs are taken care of, and future integrations are seamless and simple instead of requiring custom solutions.

-

Familiarity. A lot depends with what your team is comfortable with, and what technologies and architecture have already been integrated into your business processes. For example, if your organization is already heavily reliant upon Amazon services, it would make sense to use tools that easily integrate with that ecosystem, such as ETL tools that have built-in integrations with Amazon Redshift.

-

Budget. While some open-source products may be missing some key features that are offered with paid ETL tools, they often have proven reliability, vibrant communities and extensive documentation. On the flip side, when it comes to crunch time and you need support urgently, you might wish for the safety of a commercial option, and it may be more difficult and time-consuming to customize these for your organization. Keep in mind that often, the pricing model is more important than the product’s price tag. For example, some ETL tools set prices based on the amount of data processed, and offer really good and cheap-sounding prices to get started with. However as your needs grow and your processing increases, this amount is likely to climb exponentially, and you may find yourself locked in to a specific tool or vendor, with the costs of switching or starting from scratch prohibitive.

-

Your business goals. If end-users are not tech savvy, they will need to be spoon-fed information, often in a visually appealing way. Some ETL tools are powerful and easy to set up, but require some technical knowledge in order to get the best out of them, or have UI that is clunky and intimidating for non-technical users. Conversely, having a great looking dashboard that takes ages to update and requires constant attention from engineers is also unlikely to be popular.

All the Benefits Without ETL

There is a way to get the benefits of collecting your data from various sources without the full ETL process: ELT (Extract, Transform, Load), whereby extracted data is immediately loaded, and all data from all data sources is moved into a single, centralized data repository. Leveraging the latest infrastructure technologies and the cloud, systems can now support large storage and scalable computing. With ELT, all data is already loaded and can be used at any time.

For example, Panoply’s cloud data platform has end-to-end data management built in. It automatically extracts data from a variety of sources, stores it in a secure data warehouse, and makes it available to popular BI and analytical tools.

Simplify and Turbocharge Your Data

There are many ETL tools available, each with its own advantages, disadvantages and quirks.

For the ultimate and only tool you’ll need, look no further than Panoply’s cloud data platform. With Panoply you can automate data ingestion and make tables clear, configurable, and immediately queryable.

Seamlessly connect to any BI tool and start visualizing, analyzing, and sharing data insights in minutes: https://panoply.io/platform/

Visit Panoply online

Visit Panoply online